Confession

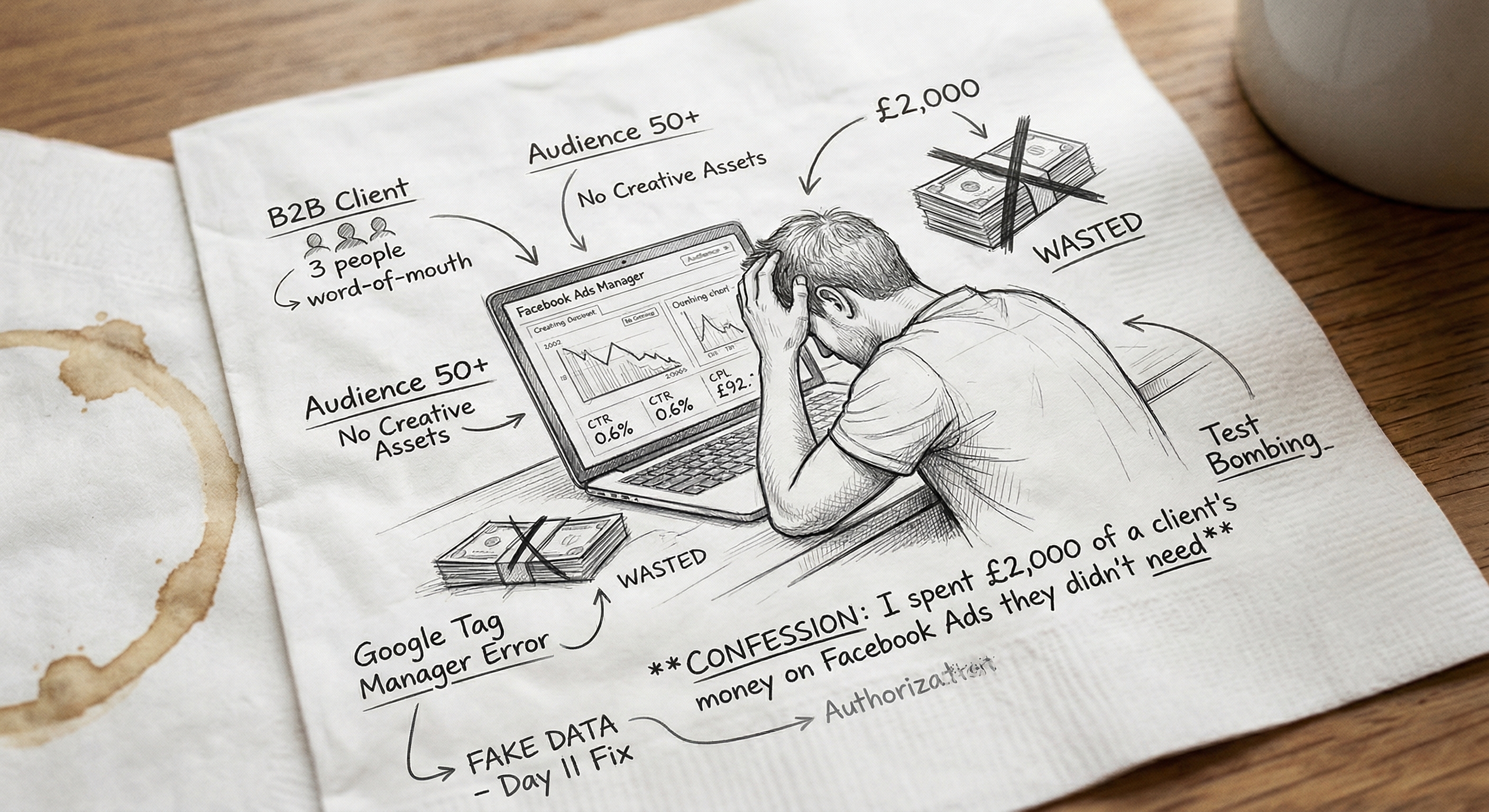

I spent £2,000 of a client's money on Facebook Ads they didn't need

An honest post-mortem on a paid social campaign that looked good in the pitch deck and bombed in practice. What went wrong, what I should have said in week one, and what I'd do differently.

The campaign had been running for 28 days when I finally admitted it to myself. I was sitting in front of Facebook Ads Manager at 11pm on a Tuesday, staring at a CPM that hadn’t moved, a CTR of 0.6%, and a grand total of two leads — both of whom turned out to be students doing a research project. The client had spent £2,000. They were locked into another month’s spend if I didn’t push back.

I should have said something in week one. I’m going to tell you why I didn’t.

The setup

The client was a small B2B services business — three people, a few hundred grand in revenue, mostly word-of-mouth and the occasional referral from a partner. The founder had been to a marketing conference and come back convinced they needed to “do paid social.” A friend of a friend introduced us. I did the discovery call, which took forty minutes, and at the end of it I knew three things:

- Their average customer was 50+, mostly came through referral, and had a sales cycle of 4–8 weeks.

- They had no creative assets that didn’t look like a 2014 PowerPoint slide.

- They were excited and they had a budget.

Two of those things should have stopped me. The third one didn’t.

The pitch I shouldn’t have agreed to

I told them honestly that Facebook wasn’t where their audience hung out in buying mode. I said it would be hard. I also said — and this is the part I’d take back — that we could “test the channel” with a £3,000 spend across six weeks and see what happened.

That sentence was the whole problem. When you say “test the channel” to a client who’s never run paid before, what they hear is “we’ll spend a bit and find out.” What you mean is “I have low confidence and I want to protect myself if it doesn’t work.” Both of you walk away feeling like you have a reasonable plan. Neither of you does.

Real testing requires a hypothesis. I didn’t have one. I had a vibe.

What we actually built

Here’s what was running by week one:

- Three audiences. A lookalike of the client’s existing customer list (about 200 people uploaded — already too small to make a useful lookalike, which I knew and ignored). A broad interest stack on the founder’s industry. A retargeting pool of website visitors, which at the time was about 800 people a month, also too small.

- Five creative variants. Two static images, two short videos (15s, 30s), one carousel. The images and videos were made from existing brochure assets, which means they looked like brochure assets. The carousel was the strongest of the five and still wasn’t good.

- Two landing pages. One generic services page, one a “free consultation” form. Both built in the client’s existing website, neither set up to track properly until day eleven, which I’ll get to.

- A budget split of roughly £40/day across the three audiences, weighted toward the lookalike.

For the first two weeks I ran the standard playbook. Let it spend. Don’t kill anything before you have data. Look at frequency, look at relevance, watch the cost per result trend. By day fourteen the picture was unambiguous. The lookalike was the best performer, which sounds good until you realise “best” meant a CPL of £92 against a target of £40. The interest stack was costing £160 a lead. The retargeting was generating impressions and clicks that turned out to be the founder, his cofounder, and me.

The bit I really don’t want to write

On day eleven I noticed that the conversion event on the landing page was firing on every page load, not just on form submissions. Someone — fine, me — had set it up wrong in Google Tag Manager when we were rushing to get live. The first ten days of “results” were fake. The cost per result had been £8 for the first week not because the campaign was working but because we were counting page views as conversions.

I fixed it. I told the client. I framed it as “we now have clean data to work with,” which is true and also the thing you say when you’re burying a screw-up. They were fine about it. They shouldn’t have been.

That mistake cost about £400 of their money. Not because the spend was wrong — the ads ran fine — but because for ten days I was making decisions on garbage. I increased the budget on the wrong audience based on the fake data. I killed a creative variant that was actually performing because the dashboard told me it wasn’t.

Always check the conversion firing. Always check it the day it goes live, before you turn on spend. I knew this. I didn’t do it. Mostly because I was excited to launch.
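For anyone who wants that check written down, here is a minimal sketch in Python. It is not what ran on this campaign, and it does not talk to Google Tag Manager or Facebook. The function names and the 50% threshold are assumptions made for illustration, and the counts are ones you would read off the tag preview and the dashboard by hand.

```python
def tag_fires_correctly(fired_on_page_load: int, fired_on_form_submit: int) -> bool:
    """Manual day-zero test: load the page once, submit the form once, count the events.

    A correctly configured tag fires zero times on load and exactly once on submit.
    """
    return fired_on_page_load == 0 and fired_on_form_submit == 1


def conversion_rate_is_suspicious(
    landing_page_views: int,
    conversions: int,
    max_plausible_rate: float = 0.5,  # assumed threshold, not a platform default
) -> bool:
    """Flag reported conversions that track page views too closely to be form fills."""
    if landing_page_views == 0:
        # Conversions with no recorded page views also point to broken tracking.
        return conversions > 0
    return conversions / landing_page_views > max_plausible_rate
```

A tag that fires on every page load reports roughly one conversion per view, so the second check trips straight away. Real form-fill rates sit far below the threshold, and a cost per result that looks too good to be true is the same warning in a different column.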

Why it bombed (and it would have bombed even with clean data)

Here’s the part nobody tells you about paid social for the wrong fit. The campaign mechanics were fine. The creative wasn’t great but it was acceptable. The targeting was reasonable given the constraints. None of that mattered, because the people who buy this client’s service do not make that decision while scrolling Facebook on their phone in the evening. They make it after a referral, after a phone call, after a meeting. The buying moment happens nowhere near the platform you’re advertising on.

You can run beautiful Facebook campaigns at audiences that aren’t in a buying mindset and burn unlimited money doing it. The dashboards will all light up. CTR will look reasonable. CPM will look reasonable. The frequency will creep up nicely. And the leads will be junk, because the people clicking are curious, not buying.

I knew this on the discovery call. I had a felt sense of it on the kickoff. By week two I was certain. I kept the campaign running because I’d told the client we’d run a six-week test and I was scared that telling them to stop would make me look like I’d wasted their money. Which, by then, I had.

I kept the campaign running because telling them to stop felt like admitting I’d wasted their money. The longer I waited, the more I wasted.

What I should have done in week one

The honest version of the conversation I should have had on day eight:

“The early data isn’t there. I can keep this running and we can spend the rest of the budget to be sure, but I’m 80% confident this channel isn’t going to work for the kind of customer you sell to. I think we should stop, refund what we haven’t spent, and put the remaining budget into something that’s a better fit — probably warming up your existing referral partners with a small piece of content they can share, or a LinkedIn outbound effort targeting the specific roles you sell to. Both of those will feel slower. They’ll also work.”

I didn’t say this because I was protecting myself, not the client. Saying it would have felt like admitting failure. Not saying it cost them another £1,200 and cost me the relationship six months later when they reasonably concluded I hadn’t earned my retainer.

What I’d do differently

I’m going to be blunt about this because it’s the only useful part:

Don’t run “tests” you don’t believe in. A test is a real bet on a hypothesis you can’t prove without spending the money. It is not a way of looking like you’re being responsible while you wait for the inevitable. If you don’t think a channel will work, say so. If you can’t say so, don’t take the work.

Set a kill criterion before you start. Write down on the kickoff call: “If by day X we have not seen Y, we stop and reallocate.” Show it to the client. The kill criterion forces you to be honest with yourself, and it gives the client a clean off-ramp that doesn’t feel like blame.
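A kill criterion is simple enough to write as code, which is a decent test of whether it is specific enough. The sketch below is one possible shape, not a standard. `KillCriterion` and `should_stop` are hypothetical names. Only the £40 cost-per-lead target comes from this campaign. The check day and the lead count in the usage note are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillCriterion:
    """Agreed with the client on the kickoff call, before any money is spent."""
    check_day: int   # the "day X" in the agreement
    min_leads: int   # the "Y": qualified leads needed by check_day
    max_cpl: float   # highest acceptable cost per lead, in pounds


def should_stop(criterion: KillCriterion, day: int, spend: float, qualified_leads: int) -> bool:
    """Return True once the check day has arrived and the campaign has missed the bar."""
    if day < criterion.check_day:
        return False  # too early to judge
    if qualified_leads == 0 or qualified_leads < criterion.min_leads:
        return True   # not enough leads, whatever they cost
    return spend / qualified_leads > criterion.max_cpl
```

With `KillCriterion(check_day=14, min_leads=5, max_cpl=40.0)`, a campaign that reaches day 14 with two qualified leads stops, whatever the spend. The point is that the decision was made before anyone had a reason to argue with it.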

Check your tracking on day zero, not day eleven. This is the boring one. Everyone says it. Everyone keeps screwing it up. Set up the conversion event, fire it, check it lands, then turn on spend.

Stop charging clients to find out things you already know. Most of the time, when a small business asks “should we be doing Facebook ads?” the honest answer is “probably not, here’s the channel I’d start with instead.” The version of me that took this engagement was the version that needed the cash and didn’t want to seem difficult. I learned to say no more often. I get fewer projects now and more of them work.

If you’ve spent money on a paid campaign you knew in your gut wasn’t going to work, you’re not alone, and writing about it is much cheaper than running it. Reading about it is even cheaper, which is the whole reason this site exists. If you want a piece of the same shape from the opposite angle, “The marketing tools I actually use vs. the ones I recommend” is in the same key. If you’d rather read something with a sharper opinion, “Stop A/B testing your button colours” is a good one to follow this with.

The £2,000 isn’t coming back. The lesson cost about that much. I’m hoping this piece makes it cheaper for somebody else.